Researchers in Australia are developing smart glasses for blind people, using a technology called “acoustic touch” to turn images into sounds. Initial experiments suggest that this wearable spatial audio technology could help people who are blind or have significantly impaired vision to locate nearby objects.

Recent improvements in augmented reality, practical wearable camera technology and deep learning-based computer vision are accelerating the development of smart glasses as a viable and multi-functional assistive technology for those who are blind or have low vision. Such smart glasses incorporate cameras, GPS systems, a microphone and inertial measurement and depth sensing units to deliver functions such as navigation, voice recognition control, or rendering objects, text or surroundings as computer-synthesized speech.

Howe Yuan Zhu and colleagues at the University of Technology Sydney (UTS) and the University of Sydney investigated the addition of acoustic touch to smart glasses, an approach that uses head scanning and the activation of auditory icons as objects appear within a defined field-of-view (FOV).

Writing in PLOS ONE, the researchers explain that acoustic touch offers several advantages over existing approaches, including ease of integration with smart glasses technology and more intuitive use than computer-synthesized speech. Such systems may also require less training for users to become proficient.

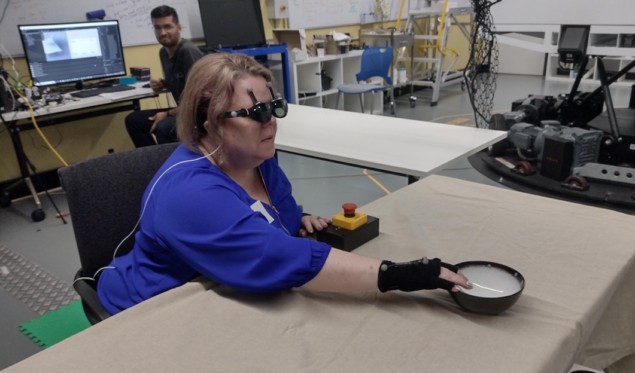

Working with ARIA Research of Sydney (which recently won Australian Technology Company of the Year for its pioneering vision-tech innovations), the team created a foveated audio device (FAD) to test these assumptions on seven volunteers with no or low vision, plus seven sighted blindfolded participants. The FAD comprises a smartphone and the NREAL augmented-reality glasses, to which the team attached motion-capture reflective markers to enable tracking of head movements.

The FAD performs object recognition and determines each object’s distance using the stereo cameras on the glasses. It then assigns an appropriate auditory icon to each object, such as a page-turning sound for a book. When a wearer swivels their head, the repetition rate of the auditory icons changes according to the item’s position within the auditory FOV.
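
To make the idea concrete, here is a minimal Python sketch of how an auditory icon's repetition rate might depend on where an item sits in the auditory FOV. It is an illustration only, not the researchers' implementation. The icon file names, the 30° half-width of the FOV, the 1–8 Hz range and the linear mapping are all assumptions, as is the choice to make the icon repeat faster as the item nears the centre of the FOV.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical icon table: only the book/page-turning pairing comes from the article.
AUDITORY_ICONS = {"book": "page_turn.wav", "bottle": "bottle.wav"}


@dataclass
class DetectedObject:
    label: str          # class name from the object recognizer
    azimuth_deg: float  # horizontal angle from the head direction (0 = straight ahead)
    distance_m: float   # distance estimate, e.g. from stereo cameras


def repetition_rate_hz(
    azimuth_deg: float,
    fov_half_width_deg: float = 30.0,  # assumed FOV size
    min_rate_hz: float = 1.0,          # assumed rate at the FOV edge
    max_rate_hz: float = 8.0,          # assumed rate at the FOV centre
) -> Optional[float]:
    """Return the icon repetition rate, or None if the item is outside the FOV."""
    offset = abs(azimuth_deg)
    if offset > fov_half_width_deg:
        return None
    # Assumed linear mapping: 1.0 at the centre of the FOV, 0.0 at its edge.
    closeness = 1.0 - offset / fov_half_width_deg
    return min_rate_hz + closeness * (max_rate_hz - min_rate_hz)


def icons_to_play(objects: List[DetectedObject]) -> List[Tuple[str, float]]:
    """Pair each in-FOV object that has a known icon with its repetition rate."""
    cues = []
    for obj in objects:
        rate = repetition_rate_hz(obj.azimuth_deg)
        icon = AUDITORY_ICONS.get(obj.label)
        if rate is not None and icon is not None:
            cues.append((icon, rate))
    return cues
```

In this sketch, a book straight ahead would play its page-turning icon at the maximum assumed rate, while a bottle 50° to one side would stay silent until the wearer turns their head towards it.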

The volunteers took part in both seated and standing exercises. The seated task required them to use various methods to search for and handle everyday items, including a book, bottle, bowl or cup, positioned on one or multiple tables. This task measured their ability to detect an item, recognize a sound and memorize the position of the item.

The researchers designed this task to compare FAD performance with two conventional cues: clock-face verbal directions, and the sequential playing of auditory icons from speakers co-located with each item. They found that for blind or low-vision participants, performance using the FAD was comparable to that under these two idealized conditions. The blindfolded sighted group, however, performed worse when using the FAD.
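
For readers unfamiliar with clock-face directions, the short Python sketch below shows one common way to turn a horizontal angle into such a cue. The 30°-per-hour convention, with 12 o'clock straight ahead and 3 o'clock to the right, is the usual one, but the exact phrasing used in the study is not described here, so the function name and output wording are assumptions.

```python
import math


def clock_face_direction(azimuth_deg: float) -> str:
    """Convert an angle (0 = straight ahead, positive = clockwise/right) to a clock hour."""
    # Each hour on the clock face spans 30 degrees; round to the nearest hour.
    hour = math.floor(azimuth_deg / 30.0 + 0.5) % 12
    if hour == 0:
        hour = 12  # straight ahead is spoken as 12 o'clock, not 0 o'clock
    return f"{hour} o'clock"
```

Under these assumptions, an item 90° to the right maps to "3 o'clock" and one 90° to the left maps to "9 o'clock".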


The standing reaching task required participants to use the FAD to search and reach for a target item situated among multiple distractor items. Participants were asked to find objects placed on three tables that were surrounded by four bottles of different shapes. This task primarily assessed the functional performance of the system, and human behaviour when using full-body movement during searching.

“This year, we’ve been heavily exploring using the auditory soundscape to support various complex tasks,” Zhu tells Physics World. “In particular, we have explored using different types of spatialized sounds to guide people during navigation and supporting sporting activities, specifically table tennis. Next year, we hope to continue expanding these areas and conduct studies in real-world settings.”